It's no secret that Firefox has been steadily losing ground over the past decade or so. Despite efforts to revitalize this once beloved titan of the internet, the market share just hasn't returned, and Mozilla's recent choices haven't been helping the cause. That being said, Mozilla hasn't given up, and after many false starts, it seems like current leadership is ready to make a serious attempt at regaining ground.

The recently introduced built-in Firefox VPN feature is an example of this, as are the (admittedly controversial) AI-powered enhancements shipped in recent releases. But are these enough to give Firefox a real chance to claw its way back to the top, or at least make it relevant enough to survive?

Let's talk about it, and see where things might be headed for our favourite red panda.

Is Firefox really dying?

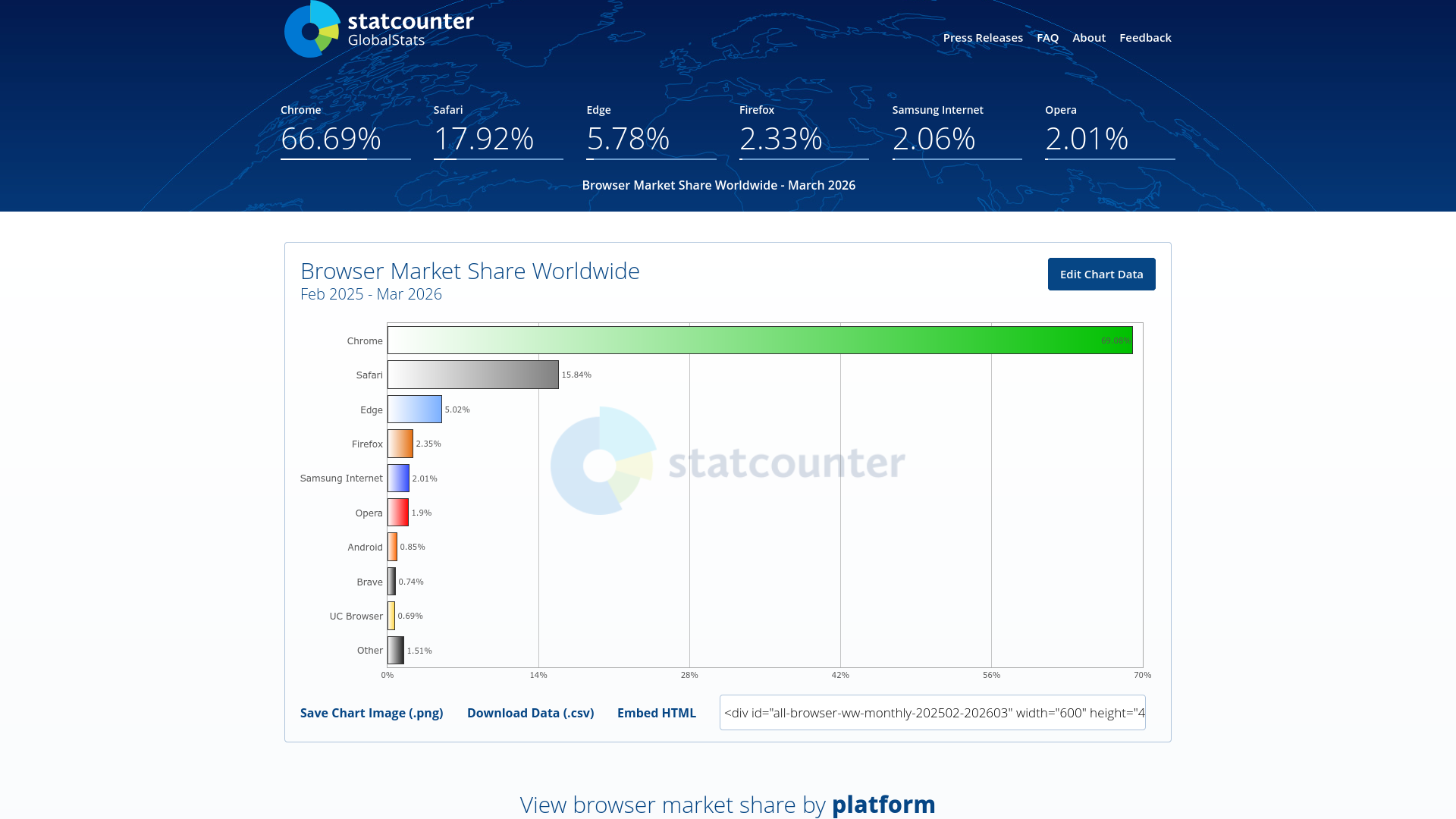

Since we’re asking whether Firefox can be resurrected, it shouldn’t come as a shock that, by the numbers, Firefox is not in a particularly good place. Since the launch of Google Chrome, Firefox has slipped, gradually at first and then more rapidly, to the point where it now accounts for just 2.29% of global browser market share, according to Statcounter. That’s down from 7.97% in 2016 (which was already quite small), a drop of roughly 5.7 percentage points in the last decade alone.

Of course, a low market share does not mean an open-source project is literally “dying”. But Firefox is not just a project. It is also a product, and as a product, it has an incentive not just to exist or survive, but to thrive. Right now, the long-term trend suggests it is doing neither especially well.

What happened to Firefox's popularity anyway?

It’s easy to snigger and say “Chrome happened, heh!” but that wouldn’t do the whole story justice. It would be equally unfair to say that the resignation of former Mozilla CEO Brendan Eich in 2014 and the subsequent creation of Brave are responsible for Firefox’s decline, even if that episode is sometimes cited as one more nail in the Firefox coffin.

Instead, the reality is a bit more complicated, and it’s worth paying attention to before we answer the questions posed by our overall premise.

For starters, Firefox has reinvented itself a bit too often in a relatively short timeframe, and unfortunately, these reinventions have at times blindsided loyal users. From Australis to Quantum/Photon, and later Proton, Mozilla has seemed to be in a relentless search for a new Firefox aesthetic. On the surface, no pun intended, this may not seem like a big deal, because after all, “a UI is just another coat of paint”, right?

The problem with change is friction

Every change is another experience for users to get used to, and adjusting to change brings friction. The more change, the more friction, and the more friction, the greater the frustration. Eventually, users get tired and move on.

By contrast, Chrome and most of Firefox’s major competitors have remained comparatively stable in their core look and feel over time, which reduces the friction users feel when moving from one version to the next. Furthermore, Firefox lost its legacy extension system and full browser theming in 2017, and before that, the standout Panorama tab groups feature in 2016. You can see the Firefox 57 transition point in Mozilla’s own release notes.

Simply put, Firefox is suffering from a war of attrition of its own making. So the question becomes: can its new features heal the scars those old wounds left behind?

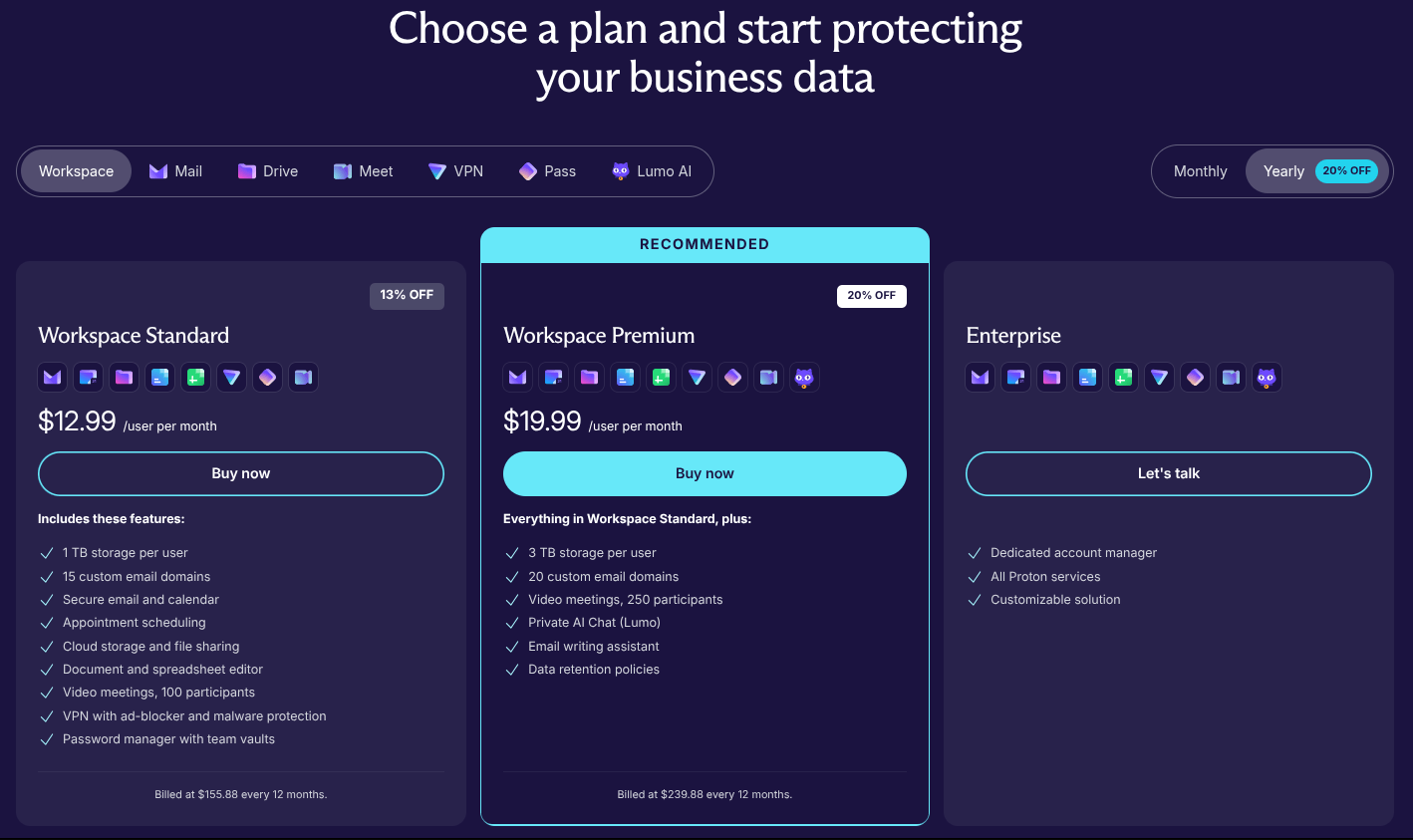

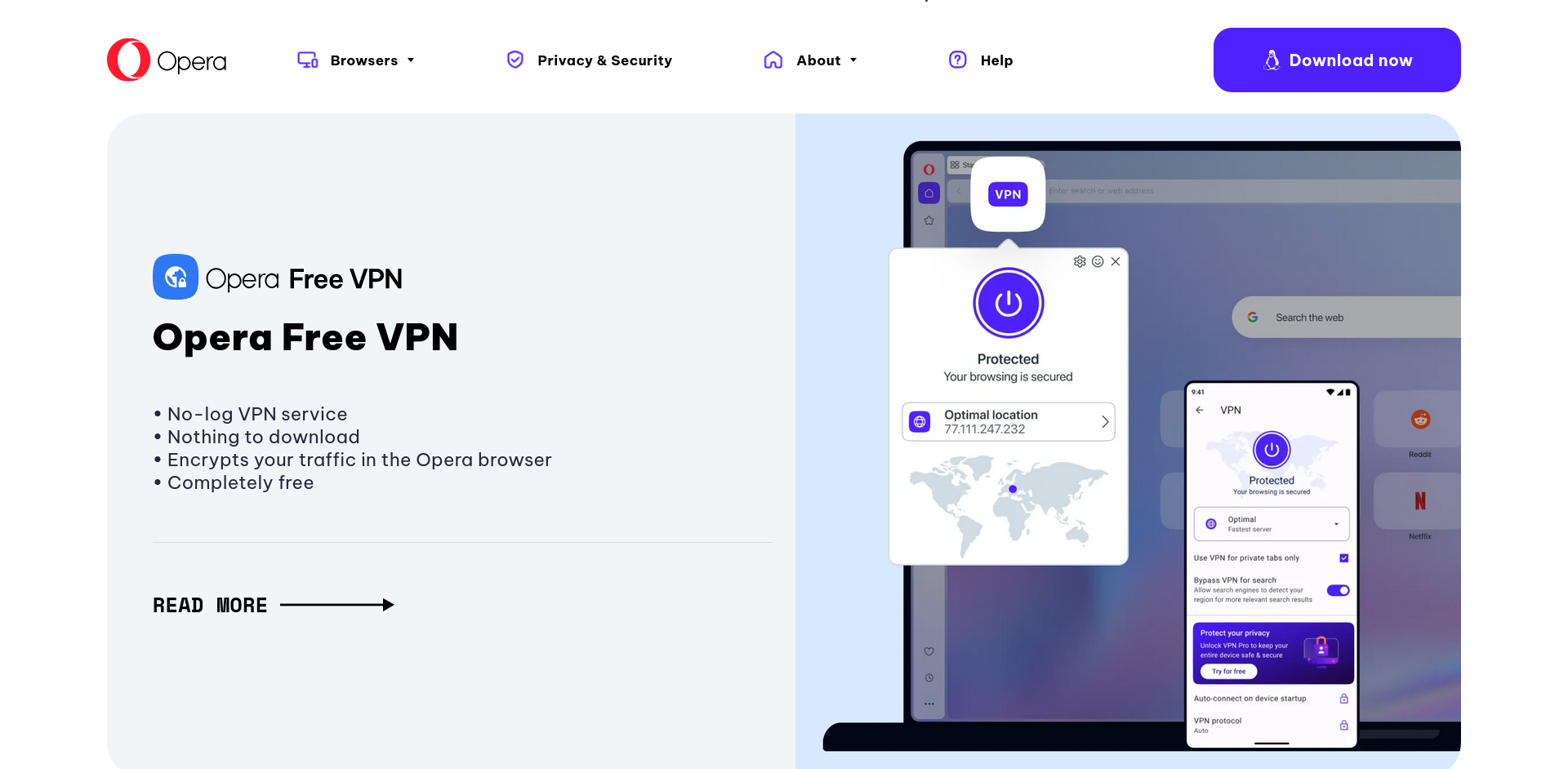

Why the new VPN matters, if they get it right

Of all the moves Mozilla has been making in Firefox recently, this one perhaps has the greatest potential to be the sleeper hit Firefox has needed for a long time. After all, Mozilla has long positioned itself as a champion of privacy and security, and Firefox still retains a stronger reputation for privacy than many of its mainstream rivals.

Unlike AI features, which many users may ignore, distrust, or actively avoid, built-in privacy tools solve a problem people already understand.

That said, Mozilla needs to be careful not to make some of the same obvious mistakes that have hurt other browsers in the past. Just as importantly, it needs to resist the temptation to keep this feature restricted to only a select few in the long run.

Don’t give us a glorified proxy

Opera tried this, and to my knowledge, its offering is still essentially a proxy, despite carrying the name of a VPN. If Mozilla is serious about this effort, then it needs to make sure that what it is calling a VPN actually delivers on what the term implies.

If this is going to matter, it cannot feel like a half-step, a marketing hook, or a dressed-up proxy with a more fashionable label. It needs to be useful, absolutely trustworthy (a very hard sell), and accessible enough that ordinary users can feel the benefit without having to decode the fine print first.

It needs to be for everyone, or it shouldn’t exist at all

That stance may sound a little hardline, but it is the stance Firefox needs if Mozilla truly intends to make this feature matter on the global stage. A privacy feature cannot meaningfully strengthen Firefox’s position if large parts of the world are excluded from using it.

The world is not limited to the US, UK, Europe, and Canada. It never was. If Mozilla is going to introduce a feature like this, it needs to be available worldwide, or it risks sending the message that a large subset of highly connected users, many of whom also contribute to the open-source technologies that make these features possible, do not matter enough to be included. Mozilla, of all companies, needs to prove that this is not its position.

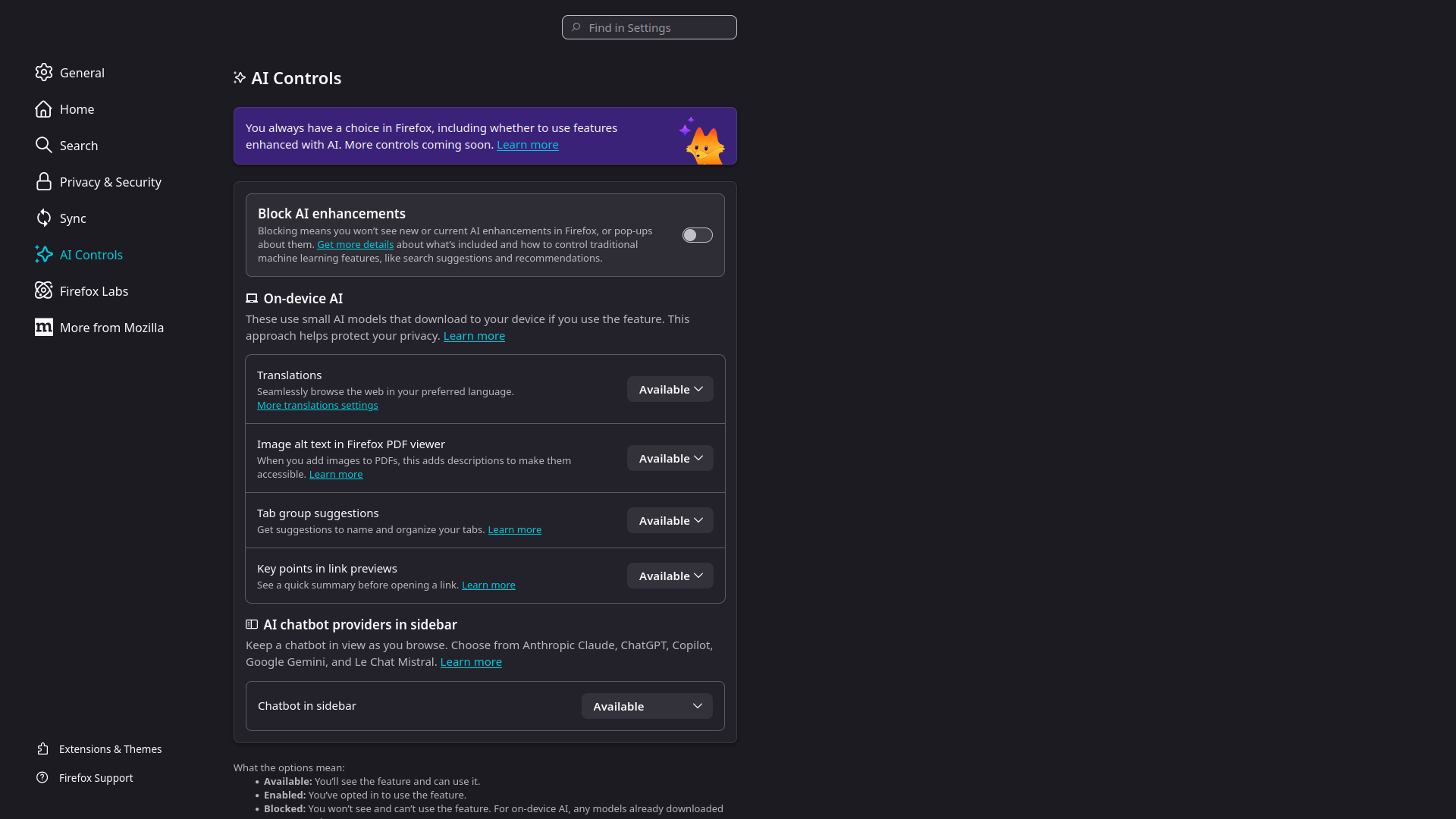

AI: Not for everyone, but maybe enough for some

It's important to understand the approach Mozilla is taking here, since this is an area where things often get framed through sensationalism rather than reality. Yes, Mozilla is adding AI features to Firefox, and at a fairly brisk pace. However, these features are still optional, though Mozilla choosing to make them opt-out rather than opt-in might leave a bad taste in some users' mouths. Mozilla’s current AI controls are part of that wider balancing act.

That being said, some users not only won't mind these features, but may sincerely expect them to be present in any modern browser, and be disappointed without them. After all, there's a very real market for the likes of Microsoft's Copilot and Google's Gemini: casual users who are less concerned with how something works than with whether they can use it.

Striking the balance

The key here isn't so much about whether Mozilla/Firefox should abandon AI altogether. It's clearly a direction Mozilla is dead set on exploring, even as privacy concerns continue to dominate the conversation. The real trick is to find a way for these features to exist while also doing something genuinely useful.

Poor article summaries and gimmicky integrations are just not going to win many people over, certainly not in the long run. But on-device tools that provide translations, help users conduct better research, navigate their browsing history more intelligently, or just generally get real work done faster without sending their data off into the void? Now that's a story most people can confidently get behind.

That's where Mozilla may have a real opening. Sure, AI isn't likely to be the thing that single-handedly "saves" Firefox, even if done "right". Yet, if it's handled carefully, it could help Firefox feel current, capable, and competitive to the kinds of users who now expect these conveniences to exist.

Counterpoint: What about the competition? Is everyone doing it?

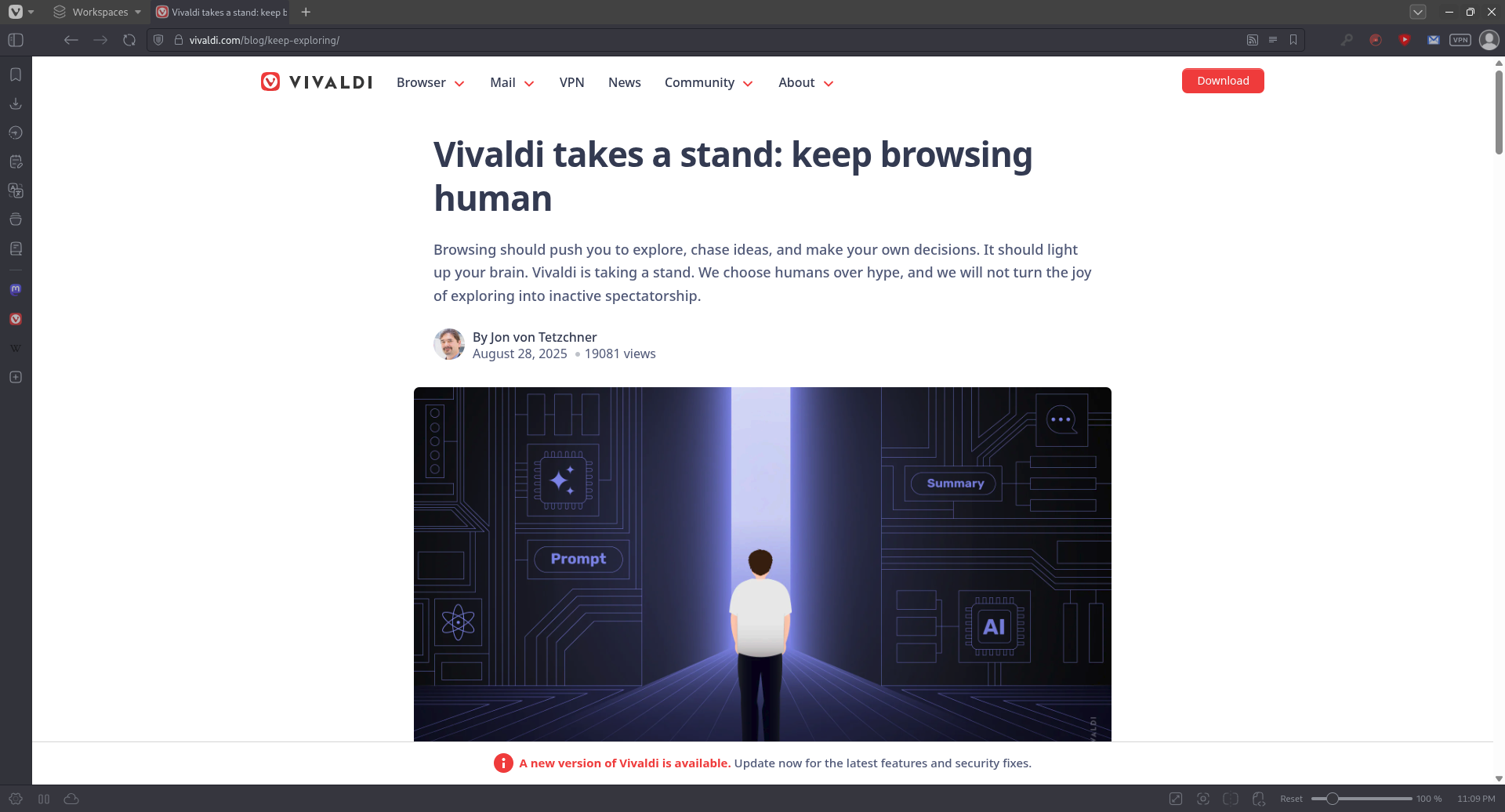

No, and if we're looking at benchmarks of success, this really matters. For example, Vivaldi, the "spiritual successor" to the pre-Chromium-clone Opera, has firmly chosen not to integrate generative AI features into the browser. They've been quite explicit about this stance with their "keep browsing human" messaging.

In a world where it seems every major browser vendor is diving in head-first, this is a bold decision that helps Vivaldi stand apart from a market increasingly saturated by the same talking points and "checklist features" that feel like mere buzzword copycatting. This is also one of the reasons why Firefox forks like Waterfox and others have continued to hold solid, faithful communities.

Truthfully, Firefox has often been chosen because it's not like the crowd: it's not Chrome, it's not a clone (it still uses its own Gecko engine), and it's the one major browser that has historically dared to remain not only independent but substantively different. So while some users won't mind a little assistance here and there, the Firefox faithful may be more likely to be the ones turned off by the "AI everywhere" trend that's taken over the internet. For those users, restraint can be a selling point in itself.

What this means for Firefox

What Mozilla is pursuing here is still quite the gamble. They're walking a fine line between the privacy-focused legacy of Firefox and the "assisted future" that the world is headed towards. It may look like the right way forward to some, but it might very well sound like a death knell to others.

Mozilla may believe in striking a balance by keeping these features flexible, optional, and in some cases locally driven. The problem is that balance is hard to achieve, and even harder to effectively communicate.

So Firefox's real challenge isn't just adding new features. It's convincing people that it still knows where to draw the line. If Mozilla gets that balance right, Firefox may come across as modern without feeling overstuffed. If Mozilla gets it wrong, it risks alienating users who just wanted a browser with boundaries.

The secret benefit of drawing attention

It would be remiss of me to close out without addressing the one thing that this new strategy by Mozilla may be most succeeding at: getting us to talk about Firefox again. Sure, not all the talk around Mozilla's recent decisions has been positive, and if we're being fair, they have given us some reasons for pause. However, if there's one thing attention does well, it's getting people to see what all the fuss is about, even if they're otherwise not sold or even all that interested.

Maybe that's what Mozilla is angling for with Firefox after all, and if they can manage to stick the landing, all this increased attention and coverage might just be the key to getting new (and old) users to try this new flavour of Firefox ice cream and find that they like it.

Is it all enough?

Frankly, it's a bit too early to tell, though the reality is that trends can often be shifted by the most unexpected winds of change. No one expected Chromebooks to become a success, until they were. At one time, no one saw smartphones coming; now they're everywhere. What drove those trends? Tiny, seemingly innocuous factors, and simple, seemingly unimportant features. The same can happen with Firefox and its ambitions to recapture its position in the hearts and minds of users around the world. Could the new VPN, together with more (but cleverly handled) AI integration, be the secret sauce that pushes things over the line?

Only time will tell, but maybe there's a chance this time.

from It's FOSS https://ift.tt/i81Zoge